
How AI Is Changing Search Algorithms and SEO Visibility

Learn how AI changes search algorithms, organic visibility, AI Overviews, zero-click behavior, and SEO strategy so your team can adapt.

AI has made search visibility harder to predict, and this explainer shows how AI is reshaping search algorithms, SEO visibility, rankings, clicks, and content strategy. SEO managers, content strategists, agency operators, and content operations leaders now face a harder tradeoff between classic SERP performance and visibility inside AI answers. AI search uses models to interpret intent, retrieve relevant evidence, and generate or rank answers across search surfaces. The payoff is a practical view of what changes, what still works, and where content workflows need tighter proof.

Coverage includes semantic search, AI Overviews, zero-click benchmarks, and generative engine optimization (GEO) workflows for cited inclusion. It also turns the concepts into usable actions, including a visibility scorecard, a cited-source sprint, and page-level evidence checks. Examples show why a page can keep impressions while clicks fall when an AI Overview resolves the basic query.

For teams managing multiple brands, clients, or locations, the value is knowing which signals to protect, which pages to package for citation, and which metrics to report beyond rankings. A five-page cited-source sprint shows how existing ranking assets can be rebuilt with lead answers, provenance, schema, and clearer internal links. Use the framework to adapt SEO strategy for both traditional results and AI search with less guesswork.

AI Search and SEO Visibility Key Takeaways

- AI search rewards intent coverage, semantic clarity, topical authority, and extractable evidence.

- Rankings still matter, but citations and answer inclusion now shape SEO visibility.

- AI Overviews can reduce clicks while increasing impressions and brand exposure.

- RAG favors pages with clear claims, supporting evidence, and crawlable context.

- Zero-click impact varies by query intent, SERP features, and brand trust.

- GEO extends SEO by packaging answers for citation, verification, and reuse.

- Measure rankings, AI citation share, click-through rate, share of voice, and assisted conversions.

How Did AI Move Search Beyond Keywords?

Artificial intelligence (AI) did not end traditional search. It changed what you need to prove. Search engine optimization (SEO) fundamentals still matter from crawlability to useful links. Isolated keywords are no longer enough across traditional results and generative answer experiences.

Search moved through four broad eras:

- Boolean matching: Searchers used operators and exact phrasing to find documents.

- Keyword and link ranking: Engines weighed term matches, backlinks, and early relevance signals.

- Neural interpretation: RankBrain-era systems in the 2010s helped Google connect unfamiliar queries to related ideas.

- Generative answers: Between 2024 and April 2026, AI search started synthesizing responses from indexed content rather than only listing blue links.

For SEO leaders, the impact of AI on search algorithms shows up in where visibility happens. Rankings still matter, but AI in search spreads discovery across AI Overviews, featured snippets, knowledge panels, brand mentions, and summarized answers.

The old playbook has not vanished, but the stronger signals have changed:

| Older SEO focus | AI-era visibility signal |
|---|---|
| Exact-match terms | Semantic clarity |
| Keyword density | Complete answer coverage |
| Backlink counts | Topical authority and trust |
| First-position clicks | Presence across answer surfaces |
| Single-page targeting | Connected content clusters |

Intent became central because AI systems estimate meaning before choosing an answer format. Search intent describes the goal behind the query. User intent is broader and may reflect:

- Past searches

- On-site behavior

- Location

- Device type

- Preferences

- Session history

Conversational Search also changed the user experience. Traditional search often works best with short, precise phrases. AI-enhanced systems can respond to vague, layered, or follow-up questions with more context. Nielsen Norman Group research available by April 2026 notes that generative AI is reshaping behavior, but conventional search has not disappeared.

The scale makes small interpretation shifts expensive. As of April 2026, common public estimates place Google at roughly:

- 5.9 million searches per minute

- 354 million searches per hour

- 8.5 billion searches per day

- 3 trillion searches per year
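These four figures are one estimate expressed at different time scales. A quick arithmetic check, using only the per-minute number quoted above (the function and variable names are illustrative), shows how they relate:

```python
# Scale the quoted per-minute estimate up to longer time windows.
SEARCHES_PER_MINUTE = 5.9e6  # public estimate quoted in the list above

def scale_searches(per_minute: float) -> dict[str, float]:
    """Return hourly, daily, and yearly volumes for a per-minute rate."""
    per_hour = per_minute * 60
    per_day = per_hour * 24
    per_year = per_day * 365
    return {"hour": per_hour, "day": per_day, "year": per_year}

volumes = scale_searches(SEARCHES_PER_MINUTE)
for window, count in volumes.items():
    print(f"per {window}: {count:,.0f}")
```

The results round to the 354 million, 8.5 billion, and roughly 3 trillion figures in the list, so the list is internally consistent rather than four independent measurements.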

At Floyi, we see AI and SEO meeting where systems choose which source to cite, summarize, or ignore. To optimize for AI search, move beyond page-by-page keyword targeting and strengthen the content system around:

- Structured topical maps

- Intent-matched clusters

- Clear entity relationships

- Modular answers

- Steady content updates

These signals help AI Search Algorithms identify who the content serves, what problem it solves, and why it earns trust.

How Do Modern AI Search Algorithms Work?

Modern AI search is a layered ranking system, not a keyword-matching machine. AI Search Algorithms combine machine learning, Natural Language Processing (NLP), deep learning, neural networks, Semantic Search, and feedback signals to read the query, gather likely answers, score relevance, and adjust the result order over time.

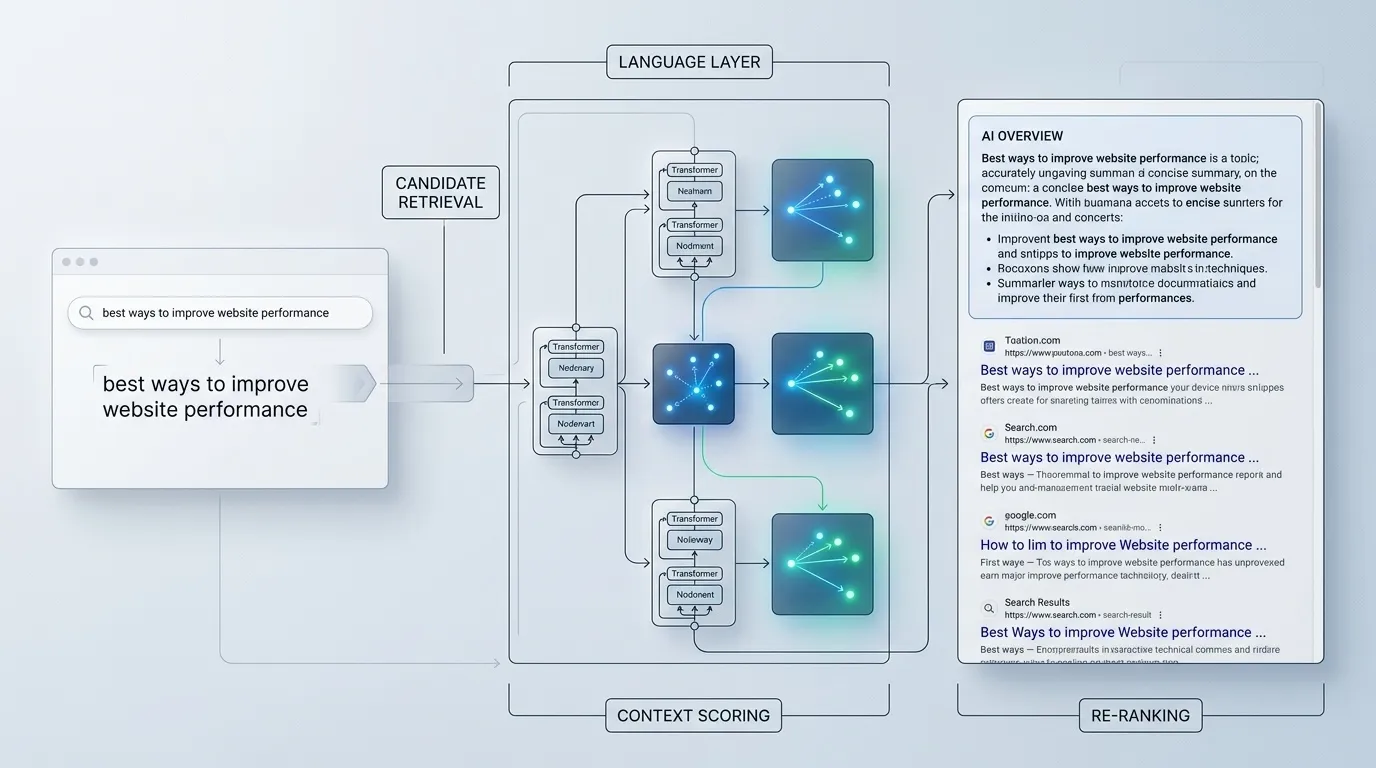

The language layer is where Search Engine Algorithms become less literal. Instead of treating a query as loose words, the system estimates the searcher’s goal and the type of answer that would finish the task.

Several model families pushed that shift forward:

- RankBrain: Connects unfamiliar or messy queries to learned patterns from similar searches.

- Bidirectional Encoder Representations from Transformers (BERT): Reads words in context, so word order, modifiers, and small terms can change the meaning.

- Multitask Unified Model: Extends language analysis across formats and languages, which helps systems connect text, images, topics, and related sub-questions.

Meaning is the bridge between wording and intent. A page can rank without repeating the exact phrase when it handles the right entity, answers the deeper need, and fits the search context better than a page built around term matching alone.

That changes what strong content needs to do. The best pages make relationships clear across the full topic set:

- Core entities and related concepts

- Subtopics that support the main intent

- Use cases that match real search behavior

- Decision points that help the reader move forward

The ranking process usually moves through three layers:

- Candidate retrieval: The engine gathers documents that may answer the query.

- Context scoring: The system evaluates relevance, freshness, authority, location, device, and likely intent.

- Re-ranking: Learned models reorder results when signal patterns suggest stronger usefulness, click-through rate, or satisfaction.
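The three layers can be sketched as a toy pipeline. This is a minimal illustration, not how any search engine is implemented: the `Doc` fields, the weights, and the word-overlap matching are all assumptions chosen to keep the example small.

```python
from dataclasses import dataclass

@dataclass
class Doc:
    url: str
    text: str
    freshness: float     # hypothetical 0..1 signal
    authority: float     # hypothetical 0..1 signal
    satisfaction: float  # hypothetical 0..1 learned usefulness estimate

def _terms(text: str) -> set[str]:
    return set(text.lower().split())

def retrieve(query: str, index: list[Doc]) -> list[Doc]:
    """Candidate retrieval: keep documents sharing at least one query term."""
    q = _terms(query)
    return [d for d in index if q & _terms(d.text)]

def context_score(query: str, doc: Doc) -> float:
    """Context scoring: blend term overlap with freshness and authority."""
    q = _terms(query)
    overlap = len(q & _terms(doc.text)) / max(len(q), 1)
    return 0.6 * overlap + 0.2 * doc.freshness + 0.2 * doc.authority

def rerank(query: str, candidates: list[Doc]) -> list[Doc]:
    """Re-ranking: reorder by context score plus the satisfaction estimate."""
    return sorted(
        candidates,
        key=lambda d: context_score(query, d) + 0.5 * d.satisfaction,
        reverse=True,
    )

index = [
    Doc("/guide", "how to fix a leaking tap step by step", 0.9, 0.7, 0.8),
    Doc("/product", "buy tap washers online", 0.5, 0.6, 0.2),
    Doc("/news", "city council budget vote", 0.9, 0.9, 0.9),
]
query = "fix leaking tap"
ranked = rerank(query, retrieve(query, index))
print([d.url for d in ranked])
```

The unrelated news page never reaches scoring, and the how-to guide outranks the product page because it matches more of the query and carries a stronger satisfaction estimate. Real systems use learned models at each stage rather than fixed weights.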

Behavioral signals add another learning loop. Clicks, dwell time, pogo-sticking, repeat searches, query refinements, and long-click patterns can hint at whether a result helped. Still, no single engagement metric acts like a fixed ranking switch.

Rankings move because the inputs keep changing. New pages enter the index, search demand shifts, user behavior evolves, and context changes by audience, location, device, and intent variant.

For SEO teams, static rank tracking still has value. Durable visibility depends more on intent coverage, topical authority, information gain, and satisfaction signals than on repeating a target phrase.

Embeddings and retrieval show how machines represent meaning and find candidate documents. Retrieval-augmented generation shows how AI answer systems select sources and synthesize responses for AI Overviews and broader search visibility.

How Do Embeddings And Retrieval Work?

Embeddings convert queries, words, entities, and pages into numerical vectors in a high-dimensional semantic space. That space lets search systems compare meaning patterns, not only the exact words someone typed.

Retrieval works like a semantic matching pass before final ranking:

- The search engine receives a natural-language query.

- Query Understanding turns the request into a query vector.

- The index supplies document, passage, or entity vectors for comparison.

- Similarity scoring checks vector closeness through distance or dot-product-style logic.

- Ranking systems refine the candidate set with metadata, links, quality signals, and context.
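The similarity-scoring step can be illustrated with cosine similarity, one common way to measure vector closeness. The three-dimensional vectors below are hand-made for the example. Real embeddings come from a trained model and have far more dimensions, and the passage labels are hypothetical.

```python
import math

def cosine_similarity(a: list[float], b: list[float]) -> float:
    """Cosine of the angle between two vectors (1.0 means same direction)."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)

# Hand-made toy vectors standing in for model-generated embeddings.
query_vec = [0.9, 0.1, 0.2]  # "average auto repair price"
passages = {
    "cost to fix a vehicle": [0.8, 0.2, 0.1],
    "faucet leak plumbing fixes": [0.1, 0.9, 0.3],
    "city marathon results": [0.0, 0.1, 0.9],
}

ranked = sorted(
    passages,
    key=lambda name: cosine_similarity(query_vec, passages[name]),
    reverse=True,
)
for name in ranked:
    print(f"{cosine_similarity(query_vec, passages[name]):.3f}  {name}")
```

The vehicle-cost passage scores highest even though it shares no words with the query, which is the behavior semantic retrieval is meant to provide.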

This is where Semantic Search changes the SEO playbook. “Average auto repair price” can sit near a page about the “cost to fix a vehicle.” “Dripping kitchen tap repair” can connect with content about faucet leaks and plumbing fixes even when the exact phrase is missing.

NLP, machine learning, Deep Learning, and neural networks help the system infer the task behind the words. A query like “best way to stop a leaking tap” signals a how-to plumbing problem. Instructional content is a stronger match than a generic product page.

Embeddings don’t replace the traditional index. They enhance it. The index still stores documents, passages, metadata, links, and other search signals. Vector retrieval adds a semantic layer that broadens candidates, clarifies entity meaning, and improves relevance before final ranking.

For Machine Learning SEO, the practical move is to write around the full problem:

- Answer the user’s task clearly.

- Name the entities that shape meaning.

- Use related terms and natural phrasing.

- Avoid repeating one exact-match keyword as the main strategy.

That gives semantic retrieval more ways to match your page to adjacent phrasing, synonyms, and related concepts.

How Does RAG Generate AI Answers?

Retrieval-Augmented Generation (RAG) bridges search retrieval and generated answers. Instead of asking a model to respond from memory, the system gathers relevant passages from the web and uses that context to shape the AI answer on the Search Engine Results Page (SERP).

The retrieval-to-generation pipeline usually moves through these steps:

- Interpret the query and infer the searcher’s intent.

- Convert the query and candidate documents into embeddings, which are numerical signals for meaning.

- Retrieve passages that appear likely to answer the query.

- Rank those passages with quality, relevance, and trust signals.

- Extract facts that directly support the answer.

- Send the grounded facts into a generative model.

- Produce a concise response that may appear inside AI-Generated Search Results, including an AI Overview.
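The retrieval half of that pipeline can be sketched in a few lines. This is a simplified illustration under stated assumptions: word overlap stands in for embedding similarity, the `trust` field is a hypothetical quality signal, the URLs are placeholders, and the generation step is left out because it depends on whichever model a system uses.

```python
from dataclasses import dataclass

@dataclass
class Passage:
    url: str
    text: str
    trust: float  # hypothetical 0..1 quality and trust signal

def score(query: str, passage: Passage) -> float:
    """Relevance (word overlap, a stand-in for embeddings) weighted by trust."""
    q = set(query.lower().split())
    p = set(passage.text.lower().split())
    return len(q & p) / max(len(q), 1) * passage.trust

def retrieve_and_rank(query: str, corpus: list[Passage], k: int = 2) -> list[Passage]:
    """Keep the top-k passages with a non-zero score."""
    scored = [(score(query, p), p) for p in corpus]
    relevant = [pair for pair in scored if pair[0] > 0]
    relevant.sort(key=lambda pair: pair[0], reverse=True)
    return [p for _, p in relevant[:k]]

def build_grounded_prompt(query: str, passages: list[Passage]) -> str:
    """Assemble numbered evidence so the generated answer can cite sources."""
    evidence = "\n".join(
        f"[{i}] {p.text} (source: {p.url})" for i, p in enumerate(passages, start=1)
    )
    return (
        "Answer using only the evidence below and cite sources by number.\n\n"
        f"Evidence:\n{evidence}\n\nQuestion: {query}"
    )

corpus = [
    Passage("https://example.com/rag", "RAG retrieves passages before the model writes an answer", 0.9),
    Passage("https://example.com/seo", "Keyword density was an older SEO focus", 0.8),
    Passage("https://example.org/forum", "I think RAG is an answer to everything", 0.3),
]
query = "how does RAG answer"
top = retrieve_and_rank(query, corpus)
prompt = build_grounded_prompt(query, top)
print(prompt)
```

Both matching passages overlap the query equally, but the higher-trust source ranks first. That is the practical reason clear, well-supported claims matter: they are what ends up in the evidence block a generative model sees.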

That shift explains why Generative AI Search can do more than return ten blue links. When the system has enough confidence, it can combine evidence into a direct response.

AI Overviews are not one-page summaries. They can blend support from official websites and publishers, then add perspective from forums like Reddit or review platforms like Yelp. The overview sits above the retrieved documents as a synthesis layer, not as a replacement for the open web.

Trust depends on which sources get selected and how clearly the answer is grounded in evidence. Google’s AI Overview materials describe an experience that uses core ranking systems, aims to show reliable information, and links back to supporting web content. For SEO teams, that makes evidence structure a visibility issue.

Pages become easier for RAG systems to use when they provide:

- Clear claims that match specific search intents

- Crawlable support sections with enough context

- Strong topical relevance between the page, passage, and query

- Evidence that can be quoted, summarized, or cited with confidence

- Traceable facts tied to URLs, anchors, dates, datasets, and checksums

Query complexity changes the output. A fast definition may rely on a small group of trusted pages and return a short answer above organic results. A complex comparison may pull from more sources, weigh different views, and create a multi-part answer that once required several searches.

Search Generative Experience, or SGE, made this visibility shift easier to spot. Ranking a full page still matters, but passage-level evidence now matters too. Brands can lose clicks when the overview satisfies the query, yet they can gain visibility when their content is cited, summarized, or treated as the trusted source behind the response.

How Does AI Improve Search Relevance?

AI improves relevance by moving search from exact word matching to intent matching. That shift matters most for long-tail, conversational, and layered queries where one search carries several needs at once. Instead of splitting the task into several searches, you can ask in natural language and still get results that match the full goal.

In SEO terms, Query Understanding means the engine reads the shape of the query, not just the words inside it. Ranking systems look for clues that point to the real task behind the search:

- Query structure that signals comparison, urgency, research, or action

- Modifiers that narrow the answer by price, size, audience, time, or quality

- Entities that connect the search to people, brands, places, products, or problems

- Implied limits that the searcher doesn’t spell out but still expects the answer to respect

- Context that helps separate a broad topic from a specific need

A few examples show how much intent can fit inside one query:

- “Best lightweight laptop for travel under $1,000” combines comparison, budget, portability, and buying readiness.

- “How to fix traffic drop after site migration” points to diagnosis, technical SEO, urgency, and recovery work.

- “Family dentist open Saturday near me” blends local intent, availability, trust, and likely appointment action.

Artificial Intelligence in Search also uses personalization as another relevance layer. Two people can search the same phrase and see different results because the system may read the person, the moment, and the likely next step differently. That doesn’t make rankings random. It makes relevance more context-aware.

The practical gap sits between search intent and user intent. Search intent keeps the diagnosis at query level. User Intent adds the surrounding signals:

- Search history that shows whether someone is learning, comparing, or ready to act

- Preferences and browsing habits that shape format, depth, and source choices

- Online behavior and purchase patterns that hint at budget, category fit, or urgency

- Location and device type that affect whether maps, quick answers, videos, or full guides make sense

- Session context and seasonal trends that change what “best,” “near me,” or “open” should mean

Location makes this easy to see. A search for “restaurants” should surface nearby choices, cuisines, hours, ratings, and map-style results. Proximity, city, neighborhood, device, time of day, and visit readiness all help decide what belongs at the top.

AI systems keep refining relevance after results appear. Clicks, query changes, dwell time, explicit feedback, and return behavior help improve similar searches over time. Quality still matters, especially accuracy, freshness, format fit, and clarity.

For SEO teams, the move is clear: build pages around complete tasks, not isolated keywords. Strong content answers the query, supports the person behind it, and fits the context where the answer will be used.

How Do AI Overviews Change Organic Visibility?

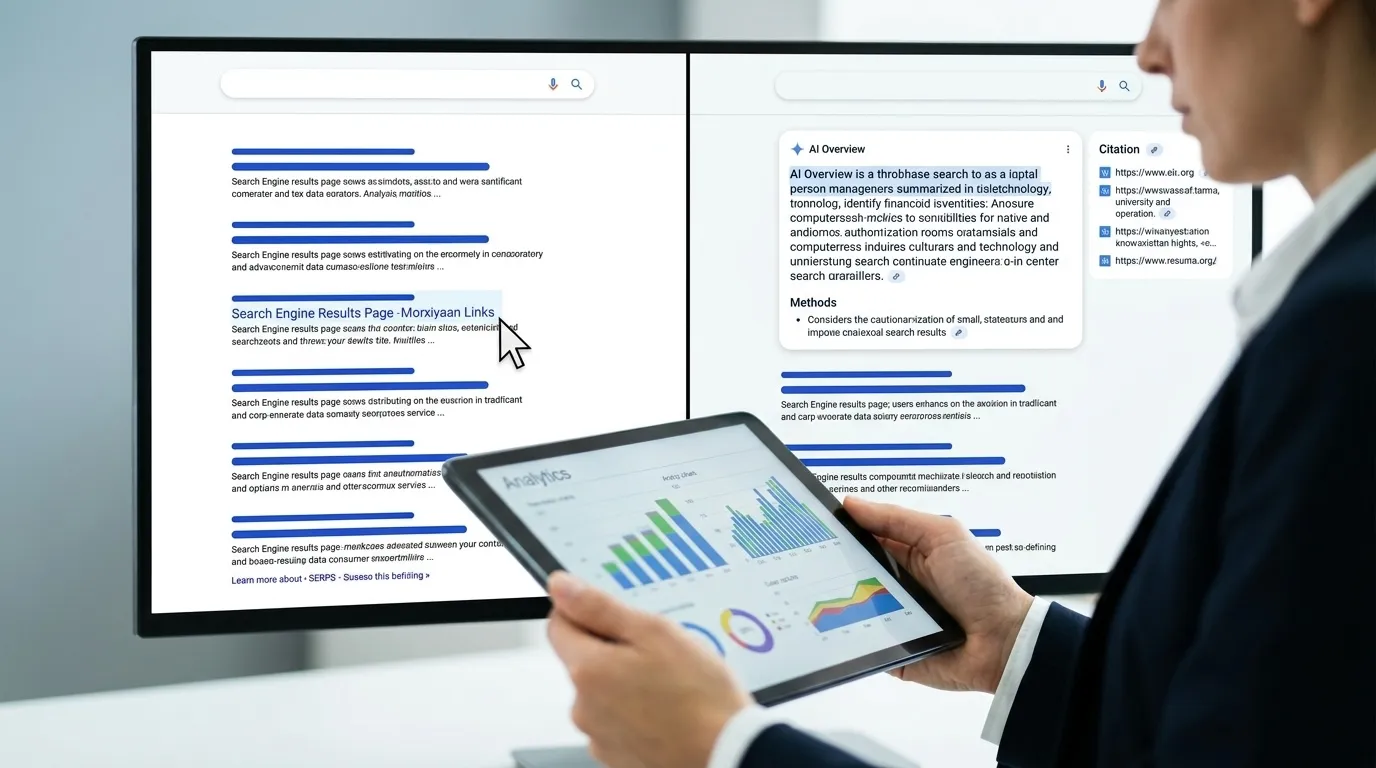

Google AI Overviews, Bing AI-powered search, ChatGPT, Gemini, Perplexity, and similar generative tools don’t erase organic visibility. They split it between classic rankings and cited inclusion inside generated answers.

That split matters because attention often moves above the blue links. An Authoritas study cited across the industry reported AI-generated summaries in roughly 30% of search results. In those moments, the top organic result can sit below a summarized answer with a small citation set.

Different search experiences shape behavior in different ways:

| Experience type | Common user behavior | Visibility impact |
|---|---|---|
| Google and Bing AI answers | Quick answers on the results page | Fewer clicks for simple fact checks |
| ChatGPT, Gemini, Google AI Mode, and Perplexity | Deeper synthesis, comparisons, and multi-step research | More value for cited sources with clear depth |
| Traditional SERP Features | Snippets, People Also Ask, local packs, videos, and shopping modules | More competition before standard links |

SEO is moving from only “rank first” to “be cited, surfaced, or used.” Search rankings still matter, but they’re no longer the whole model. Search Generative Experience (SGE) behavior has trained users to expect summarized answers before they choose a page.

Organic Click-Through Rates may become more uneven. Simple questions can lose casual traffic when the answer appears directly on the page. But citation clicks can carry stronger intent because the searcher wants detail, proof, or a next step.

Google’s position adds useful nuance. The company has said its AI answer systems use core ranking systems, top web results, the Knowledge Graph, and supporting links, which means classic quality signals still feed the citation layer. Google has also reported more than 1.5 billion global users for these experiences, a figure published by April 2026.

Measurement has to reflect that change. New engagement metrics for AI search performance help teams read visibility as ranking plus citation footprint.

A practical scorecard should compare:

- Organic sessions from traditional search

- Click-through rate by query type

- AI answer presence across priority topics

- Citation share inside answer engines

- Share of voice across tracked queries and result-page features
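A scorecard like this reduces to a few ratios per tracked query set. The sketch below assumes you already export per-query rows from your own tools. The `QueryRow` fields, the sample numbers, and the metric definitions are illustrative assumptions, not a standard.

```python
from dataclasses import dataclass

@dataclass
class QueryRow:
    query: str
    query_type: str
    impressions: int
    clicks: int
    citations_total: int  # all sources cited in the AI answer (0 = no AI answer)
    citations_brand: int  # citations pointing at your pages

def ctr_by_query_type(rows: list[QueryRow]) -> dict[str, float]:
    """Click-through rate aggregated per query type."""
    totals: dict[str, tuple[int, int]] = {}
    for r in rows:
        imp, clk = totals.get(r.query_type, (0, 0))
        totals[r.query_type] = (imp + r.impressions, clk + r.clicks)
    return {t: clk / imp for t, (imp, clk) in totals.items() if imp > 0}

def answer_presence(rows: list[QueryRow]) -> float:
    """Share of AI-answer queries where the brand is cited at least once."""
    with_answer = [r for r in rows if r.citations_total > 0]
    if not with_answer:
        return 0.0
    return sum(r.citations_brand > 0 for r in with_answer) / len(with_answer)

def citation_share(rows: list[QueryRow]) -> float:
    """Brand citations as a share of all citations across tracked queries."""
    total = sum(r.citations_total for r in rows)
    return sum(r.citations_brand for r in rows) / total if total else 0.0

# Made-up sample rows for illustration only.
rows = [
    QueryRow("what is rag", "informational", 1000, 20, 4, 0),
    QueryRow("rag vs fine tuning", "comparison", 500, 40, 5, 2),
    QueryRow("seo audit pricing", "commercial", 200, 30, 0, 0),
]
print(ctr_by_query_type(rows))
print(f"answer presence: {answer_presence(rows):.0%}")
print(f"citation share: {citation_share(rows):.1%}")
```

Keeping click-through rate split by query type matters because a single blended number hides the pattern described above, where simple informational queries lose clicks while commercial queries hold steady.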

The “AI killed search” narrative misses the real picture. SparkToro data cited by Search Engine Land says 95% of Americans still use traditional search engines monthly. The smart response is crawlable authority, clean information architecture, and extractable claims that AI systems can cite with confidence.

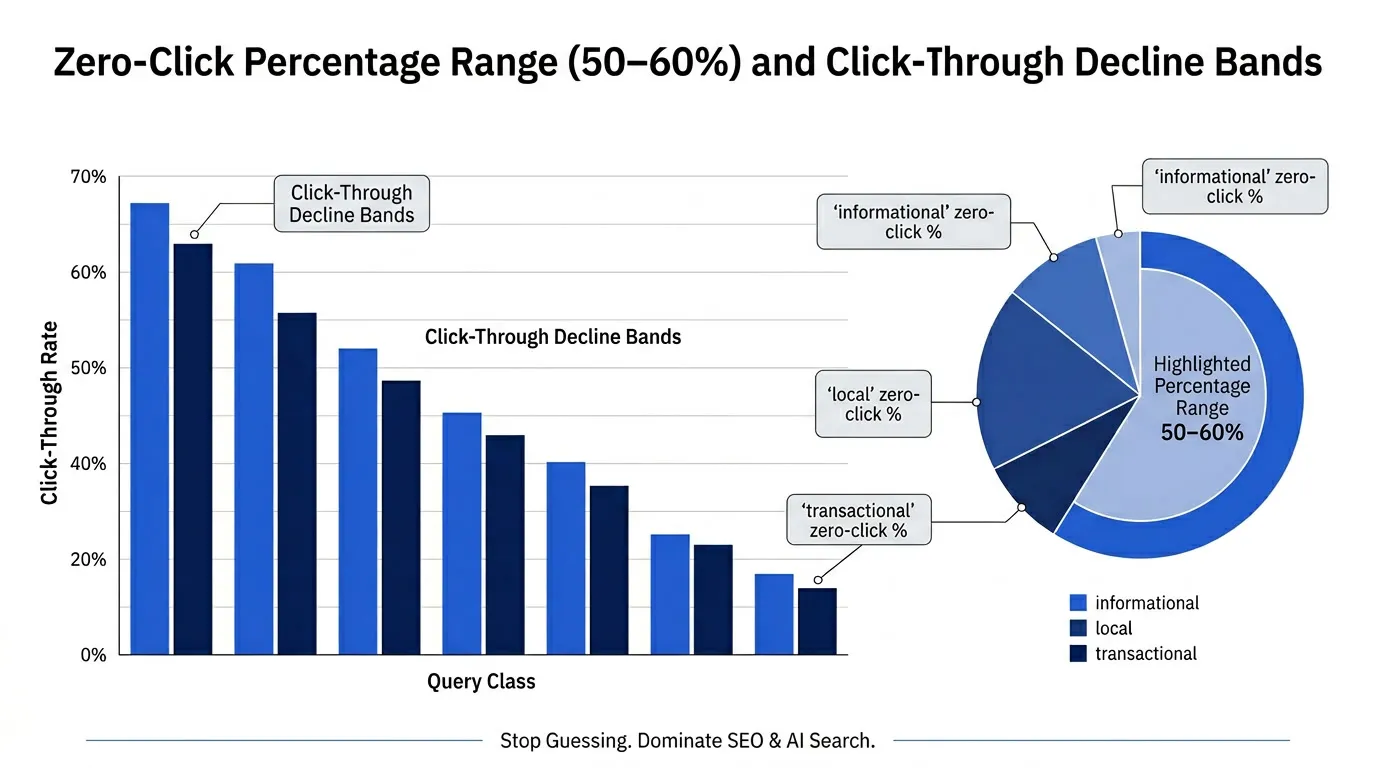

What Do Zero-Click Benchmarks Show?

Major zero-click benchmarks point in the same direction, but they measure different slices of search behavior. In broad studies, close to 59% of Google searches do not send traffic to another website. For informational queries, the no-click estimate often lands between 50% and 60% when the answer fits directly on the Search Engine Results Page.

Zero-Click Search grows when SERP Features finish the job before a user needs a site visit. Direct answers, featured snippets, knowledge panels, and AI-generated summaries can solve quick tasks inside Google. That creates a new split for publishers: more visibility, more citations, and fewer visits than the same ranking might have produced before.

The benchmark spread makes more sense when you separate each number by scope:

| Benchmark | Scope | What it means |
|---|---|---|
| 59% zero-click searches | Broad Google behavior | Many searches across mixed intents end on the results page |
| 50% to 60% no-click estimates | Informational queries | Simple answer demand is more likely to resolve without a click |
| 18% to 64% click-through rate declines | Queries with AI Overviews | Reported impact range, not a universal traffic-loss rate |
| 30% AI Overview prevalence | Sampled SERP exposure | AI answers appeared in the sample, but that doesn’t equal traffic loss |

The 18% to 64% decline range for organic click-through rates needs careful interpretation. It reflects only cases where AI Overviews appear. The actual impact shifts with:

- Query intent

- Ranking position

- Brand trust

- Snippet usefulness

- Need for deeper information

Informational publishers often see the strangest pattern in Google Search Console. Impressions can stay flat or rise because pages are still ranked, summarized, or cited. Clicks fall when the AI answer satisfies enough of the intent. Pew findings cited by Nielsen Norman Group help explain why visibility can look stable while click-through rate weakens.
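A small worked example makes the pattern concrete. The numbers are invented for illustration: impressions rise slightly between two periods while clicks fall, so click-through rate drops even though the page looks just as visible.

```python
def ctr(clicks: int, impressions: int) -> float:
    """Click-through rate as a fraction; 0.0 when there are no impressions."""
    return clicks / impressions if impressions else 0.0

def relative_change(before: float, after: float) -> float:
    """Relative change from `before` to `after` (before must be non-zero)."""
    return (after - before) / before

# Hypothetical Search Console totals for one page, before and after
# an AI Overview started appearing for its main query.
before = {"impressions": 10_000, "clicks": 500}
after = {"impressions": 10_400, "clicks": 320}

ctr_before = ctr(before["clicks"], before["impressions"])
ctr_after = ctr(after["clicks"], after["impressions"])
change = relative_change(ctr_before, ctr_after)

print(f"CTR {ctr_before:.2%} -> {ctr_after:.2%} ({change:+.1%})")
```

In this made-up case the relative decline is about 38%, which falls inside the 18% to 64% range reported for queries with AI Overviews. Comparing relative change rather than raw clicks keeps the reading honest when impressions move at the same time.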

Local search and adoption data add more context. Search Engine Land cited Whitespark’s Q2 2025 finding that AI Overviews appeared in 68% of local searches, while local packs appeared in 39%. SparkToro’s August 2025 data found that 20% of Americans used AI tools 10 or more times monthly, while 40% used them monthly.

SEO teams should benchmark by query class rather than one headline number. Measure the full visibility chain:

- Impressions by query type

- Clicks and click-through rate

- AI-citation share

- Answer presence across priority terms

- Share of voice in traditional and AI results

- Assisted conversions from later branded or direct searches

Visibility now spans ranking, citation, answer inclusion, and delayed conversion paths. Measure the full chain before treating a traffic drop as a visibility loss.

What Content Do AI Algorithms Reward?

AI algorithms reward content that solves a specific need better than nearby competitors. That shift moves SEO away from keyword repetition and toward intent satisfaction, contextual relevance, and practical help. In Machine Learning SEO, the page that wins gives models clearer evidence to trust and cleaner structure to reuse.

E-E-A-T Content works best as a practical quality filter. Experience, expertise, authoritativeness, and trustworthiness show up in the details AI-driven systems can read across your site. At Floyi, we see experience and expertise as the difference between a page that sounds correct and a page that feels useful.

Strong quality signals tend to look like this:

- First-hand proof: Real examples, workflows, screenshots, field notes, or practitioner judgment

- Subject depth: Clear explanations that move beyond basic definitions and address edge cases

- Transparent evidence: Claims supported by credible sources, named experts, original data, or clear methods

- Brand credibility: Consistent positioning, accurate author context, and useful coverage across related pages

- Topical consistency: Connected pages that reinforce one knowledge area instead of chasing isolated keywords

Natural-language depth matters because AI search tries to predict what the searcher needs after the first answer. A strong page answers the main query, then covers implied follow-up questions and practical exceptions. Generative AI also helps users frame unclear needs, scan long pages, compare perspectives, synthesize information, and evaluate sources.

Spam resistance is part of the same quality shift. AI-powered ranking systems are better at spotting keyword stuffing, thin rewrites, low-value pages, spammy pages, and mass-produced AI content. Google said its March 2024 core update aimed to reduce low-quality, unoriginal results by 40%, and some fully AI-generated sites have been removed from the index.

That doesn’t mean content teams should avoid AI tools. It means you shouldn’t publish undifferentiated AI output without editorial judgment, evidence, topical fit, original insight, or personal experience. AI can speed up research and drafting, but humans still make content worth trusting.

Modular extractability is becoming a visibility requirement. AI systems need content they can summarize, cite, and lift without stripping away meaning. Pages become easier for both readers and models to use when they contain:

- Self-contained sections that answer one clear question

- Direct definitions that explain key terms without filler

- Concise answer blocks that make the main point easy to extract

- Step-by-step procedures that preserve sequence and context

- Comparison tables and FAQs that organize choices, tradeoffs, and recurring questions

- Evidence near each claim that reduces ambiguity

The real opportunity is differentiation inside AI-generated sameness. Add original analysis, proprietary examples, expert commentary, audience-specific nuance, and topical-map consistency. High-Quality Authoritative Content still needs backlinks, mentions, and trust signals, so depth supports authority rather than replacing the signals that prove a brand deserves visibility.

How Should SEO Teams Adapt With GEO?

GEO doesn’t replace SEO. It extends AI and SEO work from ranking in the SERP to being selected, cited, and summarized inside AI-generated answers.

The core shift is packaging. Strong SEO still protects crawlability, internal links, authority signals, page speed, indexation, and technical quality. GEO adds answer-first copy, visible evidence, stable anchors, and schema that helps machines extract claims without guessing.

Start with a focused cited-source sprint instead of trying to update every page at once. Five pages are enough to build the pattern and test the workflow:

- Choose pages that already rank, convert, or support high-value buyers.

- Prioritize intents that AI answers often summarize, including who, what, how, versus, FAQ, specs, service, and location queries.

- Compare each page against traditional results, People Also Ask, AI Overviews, cited sources, and zero-click layouts.

- Note which competitors earn citations and how they phrase evidence.

- Find pages with strong expertise but weak extractable answers or unclear provenance.

Each page needs a compact GEO package near the top. The goal isn’t to over-format content. The goal is to make strong claims easy to verify and quote:

- Add a 40 to 80 word lead answer that directly resolves the primary query.

- Show a provenance line with the author or expert team, date, and evidence anchor.

- Match claims to on-page proof, such as original data, product specs, methodology, examples, or expert review.

- Add JSON-LD schema, such as Claim, FAQPage, or HowTo, when it reflects visible page content.

- Use descriptive anchor text so internal links explain the relationship between pages.
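Two of these checks are easy to automate. The sketch below tests a lead answer against the 40 to 80 word target and builds FAQPage JSON-LD from question and answer pairs that already appear on the page. The function names are illustrative, and the markup should only be emitted for content a visitor can actually see.

```python
import json

def lead_answer_in_range(text: str, low: int = 40, high: int = 80) -> bool:
    """True when the lead answer falls inside the target word count."""
    return low <= len(text.split()) <= high

def faq_jsonld(pairs: list[tuple[str, str]]) -> str:
    """Build schema.org FAQPage markup from visible question/answer pairs."""
    data = {
        "@context": "https://schema.org",
        "@type": "FAQPage",
        "mainEntity": [
            {
                "@type": "Question",
                "name": question,
                "acceptedAnswer": {"@type": "Answer", "text": answer},
            }
            for question, answer in pairs
        ],
    }
    return json.dumps(data, indent=2)

pairs = [
    (
        "Do traditional ranking signals still matter?",
        "Traditional signals still matter, but AI changes how search systems read them.",
    )
]
markup = faq_jsonld(pairs)
print(markup)
print(lead_answer_in_range(pairs[0][1]))  # short FAQ answer, below the lead-answer target
```

The output goes inside a `<script type="application/ld+json">` tag in the page. Running the word-count check in an editorial workflow catches lead answers that are too thin to stand alone or too long to quote.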

This is where GEO strengthens topical authority. Evidence-backed topic systems give GEO a clearer source graph. A flagship guide should connect to explainers, comparisons, FAQs, product or service pages, and proof assets. We see the strongest systems when every major claim points back to clear expertise and consistent evidence.

Tools and platforms for AI search optimization should support this workflow without removing editorial control. AI-Powered SEO Tools can speed up keyword research, content gap analysis, technical checks, trend forecasting, performance tracking, and brief generation. Human review still decides accuracy, usefulness, evidence quality, and brand fit.

Measurement has to move beyond rankings alone. The Future of SEO will reward teams that track visibility across ranked links and generated answers:

| Metric | Why it matters |
|---|---|
| Answer presence | Shows whether your brand appears in AI responses |
| AI-citation share | Measures how often your pages are cited versus competitors |
| Share of voice | Tracks visibility across traditional and AI-driven surfaces |
| Click-through rate | Reveals whether SERP changes are reducing traffic |
| Assisted conversions | Connects AI-era visibility to business impact |

Set a baseline before changes go live. Review fast-moving signals weekly and deeper performance monthly. Generative AI search users grew from 13 million in 2023 toward a projected total above 90 million by 2027, so the safest plan is steady adaptation backed by evidence, monitoring, and disciplined content systems.

What Risks Come With AI Search?

AI search can make answers faster and more relevant, but it also concentrates visibility decisions inside systems you can’t fully inspect. Ranking, interpretation, personalization, and citations may be shaped by model logic that isn’t fully visible. That affects privacy, forecasting, content investment, and business decisions. Not financial advice: use these risks to stress-test SEO choices, not to make investment calls in isolation.

Personalized AI search can improve relevance in ways teams cannot fully standardize. That helps the searcher, but it fragments measurement. Floyi treats one SERP view as a signal, not a complete market read, because the same query can show different answers for different audience profiles.

The main risks tend to build on each other:

| Risk area | What can go wrong | SEO and business impact |
|---|---|---|
| Privacy | Personalization may rely on sensitive behavior or location context | Targeting assumptions become harder to validate |
| Opacity | Deep learning systems may rank or cite content for reasons teams can’t trace | Forecasting and diagnostics get less certain |
| Bias and fairness | Dominant brands, majority-language sources, or well-documented views may appear more often | Niche, local, emerging, or minority perspectives can lose visibility |
| Misinformation | AI answers may misread pages or state false claims with confidence | Brand trust can be shaped before a click happens |
| Data quality | Thin, stale, blocked, or conflicting pages can distort answers | Optimization gets harder when source material is weak |

Opacity is the everyday SEO problem. A page may appear in an AI answer because of embeddings, source selection, query reformulation, freshness, authority, user context, or generation logic. That makes transparency harder when you need to explain a traffic shift to a client or executive.

Bias also has real business consequences. MIT Sloan’s Manish Raghavan has argued that AI effects need real-world study because new harms can appear after deployment. In search, that can mean smaller publishers, local businesses, and specialized experts get underrepresented even when their content is useful.

As of April 2026, Google discloses that AI Mode can present inaccurate information with confidence, miss context, misinterpret web pages, produce odd responses for unusual searches, or reflect imbalances in available information. Competitor analysis points to the same adoption challenges: data quality, technical complexity, privacy, transparency, fairness, and algorithmic bias.

Treat AI search outputs as probabilistic signals rather than fixed rankings. A safer measurement habit looks like this:

- Compare answers across audience profiles, locations, devices, and intent variants.

- Watch for missing intents where your brand should be visible.

- Validate factual claims against primary sources before acting.

- Base budget and content decisions on patterns over time, not one AI-generated snapshot.
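One way to apply that habit is to log whether your brand is cited for a query across several audience profiles each week, then read the rate over time instead of reacting to a single check. The profile labels, week labels, and observations below are hypothetical.

```python
from collections import defaultdict
from statistics import mean

# (week, audience profile, brand cited in the AI answer?) - invented sample data
snapshots = [
    ("week-1", "desktop-us", True), ("week-1", "mobile-us", True),
    ("week-1", "desktop-uk", True), ("week-1", "mobile-uk", False),
    ("week-2", "desktop-us", True), ("week-2", "mobile-us", False),
    ("week-2", "desktop-uk", True), ("week-2", "mobile-uk", False),
    ("week-3", "desktop-us", True), ("week-3", "mobile-us", True),
    ("week-3", "desktop-uk", False), ("week-3", "mobile-uk", True),
]

def presence_by_week(observations) -> dict[str, float]:
    """Share of profiles in which the brand was cited, per week."""
    counts = defaultdict(lambda: [0, 0])  # week -> [cited, total]
    for week, _profile, cited in observations:
        counts[week][0] += int(cited)
        counts[week][1] += 1
    return {week: cited / total for week, (cited, total) in sorted(counts.items())}

rates = presence_by_week(snapshots)
trend = mean(rates.values())
for week, rate in rates.items():
    print(f"{week}: cited in {rate:.0%} of profiles")
print(f"three-week average: {trend:.0%}")
```

In this sample, a single week-2 check on one mobile profile would suggest the brand had dropped out, while the three-week view shows presence holding at roughly two thirds of profiles. That is the difference between a snapshot and a pattern.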

How Will AI Search Evolve Next?

AI search will broaden search behavior, not replace Google overnight. SparkToro’s data on how Americans use Google and AI tools, available by April 2026, frames the adoption split: most people still use traditional search engines, while a smaller share uses AI tools regularly. That split is the practical lens for the future of SEO.

The next phase is hybrid. A searcher may start in Google, scan AI Overviews, test AI Mode, ask a chatbot for options, then return to classic results for sources, prices, reviews, or local proof. The shift is less about one winner and more about assisted discovery across several interfaces.

Search behavior will keep splitting by task type:

- Fast facts: Classic results still fit simple lookups and familiar queries.

- Research starts: Generative AI Search helps when people need comparison, synthesis, or a first draft of their thinking.

- Local decisions: Maps, reviews, business pages, product feeds, and AI summaries can all influence the next click.

- Follow-up learning: Conversational Search lets people refine a task without starting over.

The assistant habit is already normal. Siri, Alexa, and Google Assistant trained people to ask aloud and expect a direct response. That same pattern is moving into browsers, apps, and SERP features, where people can challenge an answer, ask for alternatives, narrow the scope, or request a next step.

Retained context will change the journey even more. Future systems will connect follow-up questions, inferred goals, prior interactions, location, device signals, and stated preferences. Google AI Mode points in that direction because it carries context across follow-ups and supports open-ended tasks. Early testing found AI Mode queries were twice as long as traditional Google searches because people asked more detailed questions.

Personalization will also deepen in ranking and answer selection. Personalized Search Results will matter most when context changes the best answer:

- Local services and nearby availability

- Shopping comparisons and product fit

- Travel planning and itinerary changes

- Technical troubleshooting and next-step diagnosis

- Research refinement across multiple sessions

Multimodal discovery will push search beyond typed queries. Google AI Mode already accepts voice, text, and uploaded images, so discovery can begin with a photo, a spoken prompt, or a detailed written request. SEO teams should make entities, products, locations, visuals, transcripts, structured data, and video context easy for AI systems to parse, summarize, and cite.

Market momentum is real, but Google still has gravity. GISMA data supports planning for gradual adoption rather than an overnight channel replacement. Nielsen Norman Group also found that Google remains the familiar default for many people. Prepare for dialogue-style, predictive, personalized, and multimodal discovery while continuing to build crawlable, useful, authoritative web content.

AI Impact On Search Algorithms and SEO Visibility FAQs

The Impact of AI on Search Algorithms raises practical questions for SEO teams. These FAQs cover what you’re likely weighing around visibility, intent signals, AI Overviews, and content strategy.

1. Do Traditional Ranking Signals Still Matter?

Traditional signals still matter, but AI changes how search systems read them. Keywords, backlinks, and Search Rankings still help, yet they’re filtered through intent fit, semantic clarity, topical authority, engagement, accuracy, and answer usefulness. The old model rewarded exact-match terms, link volume, and top blue-link clicks, while AI visibility adds entity coverage, credible sourcing, structured answers, and reach across SERPs and AI Overviews. Google remains a familiar discovery channel for Americans, so build trust through backlinks and distribution while tracking whether your brand is absent, mentioned, or cited in AI Overviews, AI Mode, and ChatGPT Search.
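
The absent, mentioned, or cited distinction can be made explicit with a small classifier. This is a rough sketch under stated assumptions: `visibility_status` is a hypothetical helper, it expects you to already have the answer text and the list of cited URLs for a given query, and it uses simple substring and hostname matching rather than any platform's API.

```python
from urllib.parse import urlparse


def visibility_status(answer_text, cited_urls, brand_name, brand_domain):
    """Classify one AI answer as 'cited', 'mentioned', or 'absent'.

    cited: at least one cited URL is on brand_domain or a subdomain of it.
    mentioned: the brand name appears in the answer text, but no brand URL is cited.
    absent: neither condition holds.
    """
    domain = brand_domain.lower()
    for url in cited_urls:
        # hostname is None for URLs without a scheme; treat those as no match.
        host = (urlparse(url).hostname or "").lower()
        if host == domain or host.endswith("." + domain):
            return "cited"
    if brand_name.lower() in answer_text.lower():
        return "mentioned"
    return "absent"
```

Substring matching will miss misspellings and product-only references, so treat the output as a first pass and spot-check the results by hand.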

2. How Does Personalization Shape AI Search?

AI search creates Personalized Search Results through context-aware ranking. Predictive systems refine results in real time, so a nearby mobile searcher may see local actions while a desktop researcher may see deeper educational sources. Conversational AI can carry context across follow-ups, which means “Which one lives longer?” depends on the earlier cats-versus-dogs comparison. Relevance improves, but privacy risk rises and SEO measurement gets harder because rankings, click-through rates, SERP features, AI answers, and source selections vary by user.

3. Can AI Search Detect Content Manipulation?

Yes. AI search can detect many forms of content manipulation, but it focuses on usefulness, not AI authorship alone. Google’s quality systems, SpamBrain, and AI Overview protections can suppress keyword stuffing, thin pages, spammy layouts, mass AI spam, scraped content, synonymized rewrites, and pages built mainly for site-owner gain. Google has tied low-value AI-generated sites and spam updates to originality and page usefulness. Strong E-E-A-T Content, High-Quality Authoritative Content, and positive engagement signals help prove the page meets intent.

4. How Are Sources Chosen For AI Answers?

AI systems typically choose sources by matching the query intent to passages they can retrieve, verify, and turn into a concise answer. With RAG, Google AI Overviews and other AI-Generated Search Results may weigh top web pages, official sites, Reddit threads, Yelp reviews, and similar sources differently by query type. AI Overviews still favor reliability because core ranking systems, quality signals, spam protections, policies, and confidence checks shape what gets used. For quick definitions, fast facts, and complex questions, pages with direct answers, evidence, structured data, provenance, and claim-level facts are easier for AI systems to extract, quote, and link.

About the author

Yoyao Hsueh

Yoyao Hsueh is the founder of Floyi and TopicalMap.com with over seven years of hands-on SEO experience. He has built topical maps and consulted on content strategies and SEO plans for more than 300 clients. He created Topical Maps Unlocked, a program thousands of SEOs and digital marketers have studied to build topical authority. He works with SEO teams and content leaders who want their sites to become the source traditional and AI search engines trust.

About Floyi

Floyi is a closed loop system for strategic content. It connects brand foundations, audience insights, topical research, maps, briefs, and publishing so every new article builds real topical authority.